Understanding Transformers in 2026


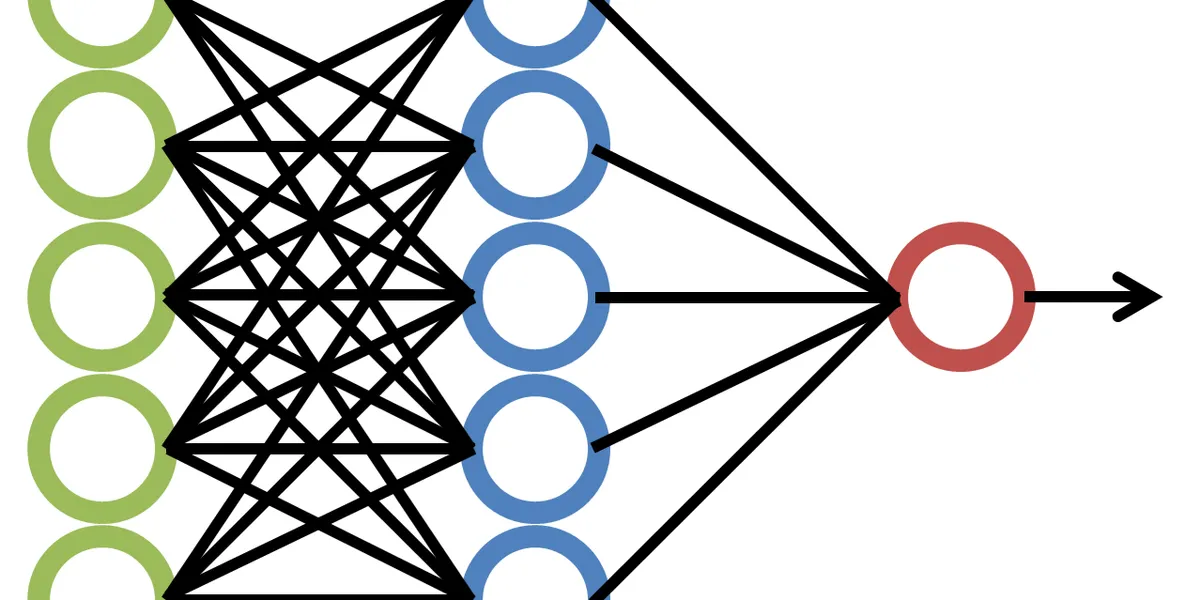

The Core Concept

Self-attention lets a model weigh how relevant every other position in the input is when it computes the representation of a given position. Here is a simple Python snippet to illustrate:

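```python
import torch
import torch.nn.functional as F

def self_attention(query, key, value):
    # Standard scaled dot-product attention
    # d_k is the size of the last (feature) dimension of the query
    d_k = query.size(-1)
    # Similarity of every query with every key, scaled by sqrt(d_k)
    scores = torch.matmul(query, key.transpose(-2, -1)) / (d_k ** 0.5)
    # Normalize over the key dimension so each row of weights sums to 1
    p_attn = F.softmax(scores, dim=-1)
    # Weighted sum of the value vectors
    return torch.matmul(p_attn, value)
```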
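
To make the arithmetic concrete, the same computation can be traced by hand on a tiny example. The sketch below is a plain-Python rewrite that does not use PyTorch. The names `softmax` and `attention_by_hand` and the toy inputs are assumptions made up for illustration, and the code only handles a single unbatched sequence given as lists of rows.

```python
import math

def softmax(row):
    # Subtract the max before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention_by_hand(query, key, value):
    # query, key: seq_len rows of d_k floats; value: seq_len rows of d_v floats.
    d_k = len(query[0])
    d_v = len(value[0])
    scale = math.sqrt(d_k)
    output, weights = [], []
    for q in query:
        # Scaled dot product of this query with every key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in key]
        w = softmax(scores)
        weights.append(w)
        # Weighted average of the value rows.
        output.append(
            [sum(w[j] * value[j][c] for j in range(len(value))) for c in range(d_v)]
        )
    return output, weights

# Toy inputs (illustrative values only).
q = [[1.0, 0.0], [0.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]
out, attn = attention_by_hand(q, k, v)
```

With these inputs, each row of `attn` sums to 1, and the first query puts more weight on the first key than on the second because their dot product is larger. Each row of `out` is therefore a blend of the value rows, pulled toward the row whose key best matches the query.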